There’s nothing dumber than artificial intelligence.

And there’s probably nothing right now that makes you dumber than artificial intelligence.

What are we talking about here?

To simplify things: artificial intelligence systems built on conversational agents such as ChatGPT, Gemini or Claude rely on the instantaneous collection of data across the Internet and the immediate production of a synthesized response.

This process poses several intrinsic problems.

First, conversational agents only have access to a tiny fraction of knowledge on any given question, since they can only reach data available online. They have no access to content behind paywalls (such as online newspapers) or to material protected by copyright (the world of publishing, for example). In other words, chatbots rely on knowledge that is often superficial, fragmentary and incomplete, because the Internet itself is a pale reflection of the knowledge that has not fallen into the public domain and that exists in theses, dissertations, documentaries – in short, in books.

The same is true for fictional or literary domains. If, for example, you ask ChatGPT for an in-depth analysis of Jane Austen’s Pride and Prejudice, chances are you’ll be left unsatisfied, which would not happen if you opened the French La Pléiade edition devoted to the author, with its studies and notes.

Second, conversational agents provide answers based on whichever theory dominates a subject, as algorithmic logic dictates, even though plagiarism and the endless repetition of narratives are rampant online. This leaves little room for exceptions, divergent voices, nuance, or subtlety, and it becomes highly problematic when dealing with complex topics.

Third, the answers produced by these chatbots are sometimes poorly sourced, sometimes misattributed, sometimes entirely fabricated – that is, without any source at all. ChatGPT will never admit that it doesn’t know; it will invent sources. I tested this with my beloved teen-who-is-now-a-young-woman: I asked ChatGPT a rather technical question in law (I’m a Doctor of Law and a lawyer), and my beloved teen-who-is-now-a-young-woman, who’s a PhD student in gender studies, asked a complex question in sociology. Both of us received references to texts we’d never heard of – and never found afterward. You might think these gaps stem from the dominance of English in training data? Not so: I asked my question in French, and my daughter asked hers in her other native language, English. These are what are called AI hallucinations: the tone sounds credible, but the substance doesn’t exist.

Fourth, the number of articles generated by artificial intelligence has now become roughly equivalent to the number of human-written articles online, according to an October 2025 report from the SEO firm Graphite. A 2022 Europol report cited expert estimates that as much as 90% of online content could be synthetically generated by 2026. Even if we’re not quite there yet, it’s delusional to think that AI-generated content won’t soon vastly outnumber human-created material.

The truth is that artificial intelligence operates in a closed loop, feeding on itself. The models behind conversational agents are being repeatedly trained on outputs from other AI models, producing content that is increasingly imprecise and unreliable, as they reuse their own productions and amplify their own errors or approximations. The phenomenon, studied by researchers at Stanford and Oxford, has been dubbed model collapse, and, unsurprisingly, it shows no sign of stopping, since the Internet is being flooded with AI-generated material.

As I was saying: there’s nothing dumber than artificial intelligence.

Where a search engine offers you thousands of entries on a topic, forcing you to research, compare, assess, reason, and form your own opinion, conversational agents do that thinking for you, providing a ready-made opinion with little nuance or depth, if not outright errors.

Or bias. Their responses can be influenced by economic, political, or geostrategic interests – and the true masters of information of Tomorrowland will be those who can control the entire chain of narratives, from the creation of information to its reproduction within AI models.

The U.S. startup NewsGuard, which monitors online misinformation, regularly warns about the proliferation of unreliable, AI-generated news websites spreading false or state-sponsored content on topics ranging from politics to technology, entertainment, and travel. In 2025, it flagged such propaganda originating from Russia and China.

UNESCO, for its part, warned as early as March 2024 about the spread of racist, sexist, and homophobic stereotypes within Meta’s and OpenAI’s language models – subtly shaping the perceptions of millions and paving the way for the replication of those biases in real life.

Artificial intelligence thrives because we are lazy. AI makes us dumb – but the reality is, AI thrives because we humans have been dumb enough to play sorcerer’s apprentice without an ounce of responsibility.

AI thrives because we live under a logic of profitability where the least effort, combined with the greatest speed, serves a capitalist model of minimal cost and maximal productivity.

Deloitte, one of the Big Four, the world’s four largest audit and consulting firms, found itself at the heart of a massive scandal in August 2025 for having delivered a report entirely written by artificial intelligence – and, of course, sold for a fortune. Commissioned in 2024 to produce a report for Australia’s Ministry of Employment for the modest sum of €260,000, Deloitte delivered a document in July 2025 that was not written by human experts but generated by AI, full of fabricated citations, references to non-existent scientific articles, and court rulings that simply did not exist. The Australian Ministry of Employment caught Deloitte red-handed and, unsurprisingly, demanded a partial refund of the fees.

The reality is that knowledge, in general, lives in books. It’s by slogging through theses, dissertations and books on a given subject that one can form a valid opinion. It’s by taking the time to absorb contradictory information that one can form a valid opinion.

The reality is that creativity, in general, lives in the human mind. It’s by thinking deeply about a topic that we discover unexplored solutions – because the human mind possesses real intelligence, but also instinct, soul, heart, and body. And it’s precisely that combination that determines whether a solution is viable.

That all of humanity would base its opinions on the answers produced by conversational agents is profoundly worrying. The risk, in the years to come, is a rapid drying up of knowledge, understanding, and human intelligence – intellectual as well as emotional.

The division of humanity, already well underway, will only deepen – each person retreating behind opinions stripped of nuance, human understanding and social connection.

The isolation of human beings, already well underway, will only deepen – each person escaping into an over-stimulating and over-entertaining digital world, devoid of human understanding and social connection.

The phone will likely disappear, replaced by glasses (Meta), implants (Musk) or headsets (Apple) that will eliminate the visible interface of the smartphone in favor of invisible, personalized, interactive digital layers embedded in the real world.

Humanity is handing over the reins of its decision-making capacity to artificial intelligence, with no real oversight, no regulation. Black Mirror isn’t far off, and that’s rather alarming.

As I was saying, there’s probably nothing right now that makes you dumber than artificial intelligence.

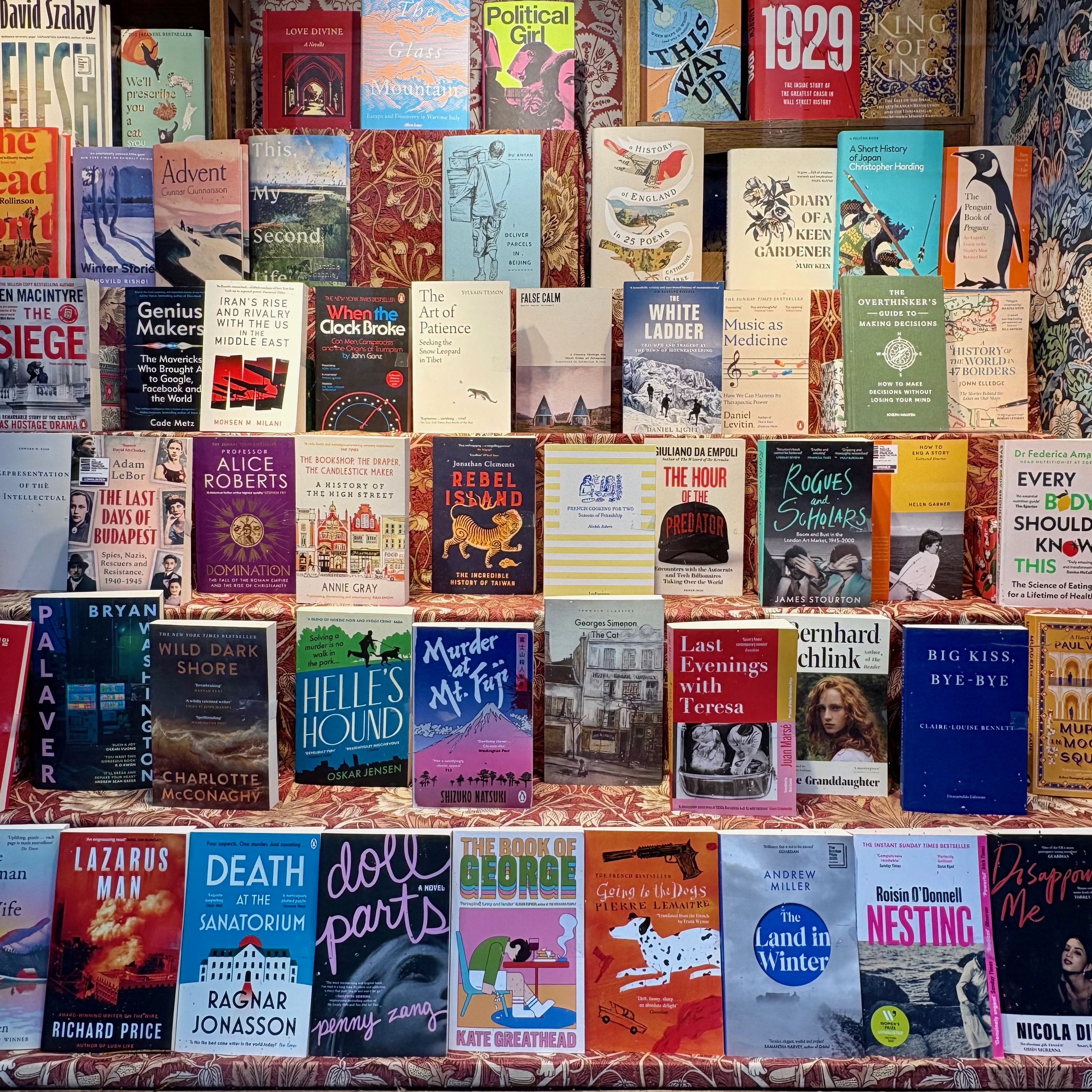

Editor’s note. Here I am, at Daunt Books, Marylebone, London. As I write this, visual-content generation tools are becoming increasingly sophisticated, making the distinction between a real photograph and an AI-generated one extremely difficult, if not impossible. When it comes to digital fashion and influencers, social media are about to be flooded with supposedly perfect photographic content presented by digital avatars based on real people, yet devoid of rough edges, humanity, or any real depth. It is highly likely that this will repulse and drive away a large portion of social-media consumers (because yes, it’s a form of consumption) on platforms such as Instagram or TikTok. You will never see AI-generated photos here. Considering that I publish one article per week and that this naturally requires a bit of work, it would certainly be easier to generate photos with a prompt on Nano Banana. I refuse to do so on ethical grounds: it lacks reality, truthfulness, authenticity, and alignment. Moreover, it is far more interesting – and much more fun – to search for and find the right location and outfit to create the photographs that illustrate each article.

January 23, 2026